Generic large language models are impressive, but they do not know your business. They have not read your handbook, your case files, or the literature your practitioners trust. A Retrieval-Augmented Generation (RAG) system changes that: the model is grounded in a curated knowledge base you own, so answers can be traced to sources you control, and keeping them current means updating that knowledge base rather than retraining the model.

I design and build production RAG systems and AI-assisted mobile applications — from the knowledge pipeline and the embedding strategy, through the conversational layer, to the infrastructure that keeps it private, compliant, and cheap to run.

What I build

- Domain-grounded conversational agents — the AI retrieves from a knowledge base you own, not from the open internet.

- Persistent user memory — sessions and long-running context, so the agent remembers what a user has shared across conversations.

- Tiered model orchestration — faster, cheaper models for anonymous and free-tier traffic; larger models for paying users and complex queries.

- Evaluation harnesses — so retrieval-quality regressions are caught before users notice them.

- Rapid AI-assisted mobile MVPs — idea to store listing in weeks, not quarters.

- Multilingual architecture — built from the first line of code to scale into additional languages without a rewrite.
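To illustrate the evaluation-harness idea, here is a minimal Python sketch of a retrieval quality gate. It uses a small golden set of queries, each paired with the chunk that should come back, and scores them by recall@k. Everything here is an assumption for illustration. The function names and `RetrievalRegression` are hypothetical, and the brute-force cosine ranking stands in for the nearest-neighbour search a vector store such as pgvector would perform in production.

```python
import math


class RetrievalRegression(Exception):
    """Raised when retrieval quality falls below the agreed threshold."""


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k):
    """Ids of the k chunks most similar to query_vec.

    `index` maps chunk id -> embedding. This brute-force ranking stands in
    for the nearest-neighbour search a vector store would perform.
    """
    ranked = sorted(index, key=lambda cid: cosine(query_vec, index[cid]), reverse=True)
    return ranked[:k]


def recall_at_k(golden, index, k):
    """Share of golden (query_vec, expected_id) pairs whose expected chunk is in the top k."""
    if not golden:
        raise ValueError("golden set is empty")
    hits = sum(1 for query_vec, expected_id in golden
               if expected_id in top_k(query_vec, index, k))
    return hits / len(golden)


def quality_gate(golden, index, k=3, threshold=0.9):
    """Return recall@k, or raise RetrievalRegression if it is below threshold."""
    score = recall_at_k(golden, index, k)
    if score < threshold:
        raise RetrievalRegression(f"recall@{k} = {score:.2f} < {threshold:.2f}")
    return score


if __name__ == "__main__":
    # Toy 2-D vectors; real embeddings come from the embedding model.
    index = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.7, 0.7]}
    golden = [([0.9, 0.1], "a"), ([0.1, 0.9], "b")]
    print(quality_gate(golden, index, k=1, threshold=1.0))  # prints 1.0
```

In a CI pipeline, a gate like this would run against real query embeddings and a maintained golden set. The choice of k and the threshold is a product decision, not a fixed number.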

Reference project — Sedno

Sedno is a mobile wellness application I am currently working on. It helps users explore the emotional and psychological roots of physical symptoms through a conversational AI agent grounded in Total Biology literature.

Sedno is representative of the stack I use for RAG-enabled mobile products:

- Mobile client — Flutter, shared across Android and iOS for a single codebase and a consistent experience.

- Back-end — ASP.NET Core REST API, hosted in the EU (Frankfurt) for GDPR compliance.

- Knowledge retrieval — pgvector on PostgreSQL, seeded with Ollama-generated embeddings of curated domain literature.

- Language models — Anthropic Claude, tiered by user plan: Claude 3 Haiku for anonymous traffic, Claude Haiku 4.5 for registered free users, Claude Sonnet 4.5 for premium subscribers.

- Billing — RevenueCat for Apple App Store and Google Play subscriptions.
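One way to express the plan-based tiering listed above is a lookup from user plan to model, resolved on each request. This is a hypothetical sketch, not Sedno's code. `User`, `has_premium`, `resolve_plan` and `select_model` are illustrative names, and the model strings are display names rather than verified API identifiers. In practice, the premium flag would be derived from the subscription state reported by the billing provider.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Plan(Enum):
    ANONYMOUS = "anonymous"
    FREE = "free"
    PREMIUM = "premium"


@dataclass
class User:
    # Hypothetical user record; `has_premium` would come from billing state.
    user_id: str
    has_premium: bool = False


# Display names only; look up exact API model ids in Anthropic's documentation.
MODEL_BY_PLAN = {
    Plan.ANONYMOUS: "Claude 3 Haiku",
    Plan.FREE: "Claude Haiku 4.5",
    Plan.PREMIUM: "Claude Sonnet 4.5",
}


def resolve_plan(user: Optional[User]) -> Plan:
    """No user means anonymous; otherwise the subscription decides the tier."""
    if user is None:
        return Plan.ANONYMOUS
    return Plan.PREMIUM if user.has_premium else Plan.FREE


def select_model(user: Optional[User]) -> str:
    """Pick the model for this request from the user's plan."""
    return MODEL_BY_PLAN[resolve_plan(user)]
```

Keeping the mapping in one table means a tier can be moved to a different model without touching the request-handling code.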

The result is an AI that answers in warm, accessible Polish, grounded in the client’s own knowledge base, remembering context across sessions, and available at 2 a.m., when no practitioner is on call and a book cannot answer follow-up questions.

Sedno has been approved by the Apple App Store and Google Play; public release is imminent.

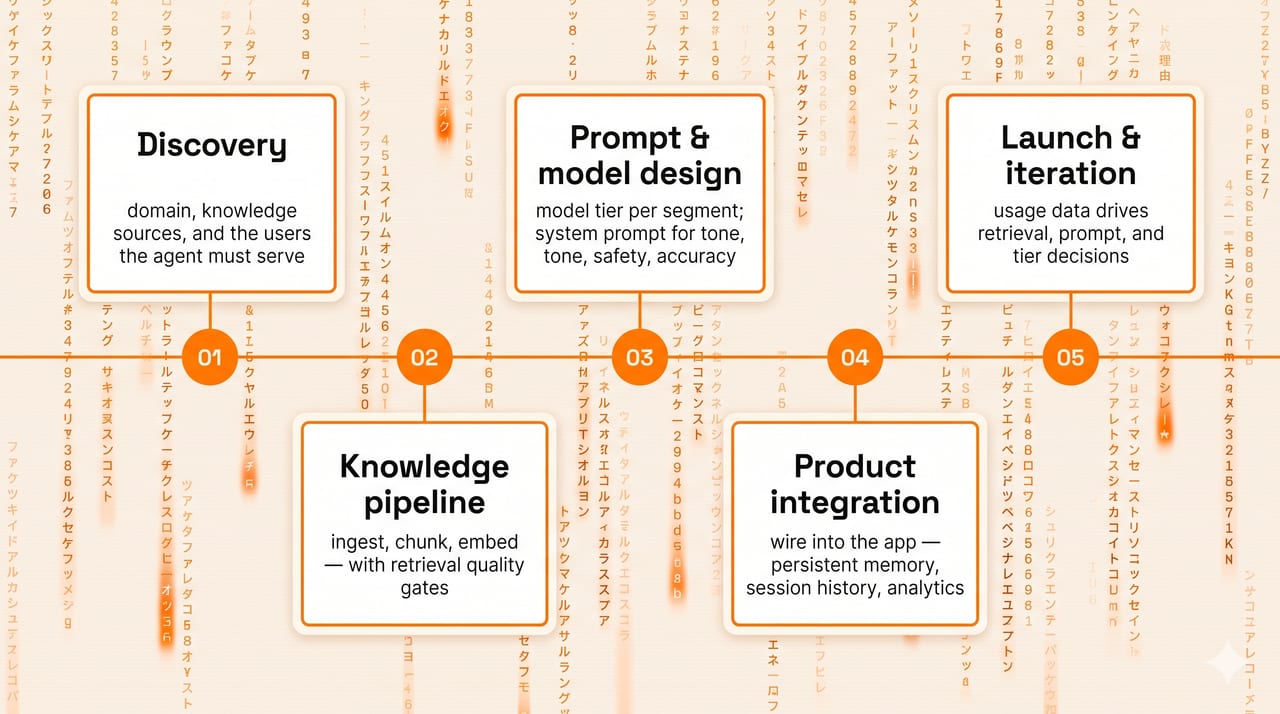

How an engagement works

- Discovery — understand the domain, the knowledge sources, and the users the agent needs to serve.

- Knowledge pipeline — ingest, chunk, and embed the source content; define retrieval quality gates.

- Prompt and model design — choose the right model tier for each user segment; design the system prompt for tone, safety, and accuracy.

- Product integration — wire the agent into the application (mobile, web, or internal tool) with persistent memory, session history, and analytics.

- Launch and iteration — usage data drives retrieval quality, prompt tuning, and model-tier decisions after go-live.
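In the knowledge-pipeline step, chunking is often done with a fixed-size window and some overlap, so text near a chunk boundary keeps part of its surrounding context in the neighbouring chunk. The sketch below assumes word-based windows for simplicity. A real pipeline would more likely count tokens and respect headings or paragraphs. `chunk_text` and its default sizes are hypothetical.

```python
def chunk_text(text: str, max_words: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of up to max_words words.

    Consecutive chunks share `overlap` words. Word counts are a simple
    stand-in for the token counts a production pipeline would use.
    """
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("require max_words > 0 and 0 <= overlap < max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        # Stop once this window has reached the end of the text.
        if start + max_words >= len(words):
            break
    return chunks
```

Each chunk would then be embedded and stored alongside a reference to its source document, so answers can be traced back to it.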

Once the product ships, it has to stay accurate and cheap to run. I build the pipelines and guardrails that keep it that way.

Related services

- Fractional CTO — senior leadership for the broader product

- .NET and C# — the ASP.NET Core back-end that often sits behind these systems

- Drupal consultancy